ChatGPT is amazing. But beware its hallucinations!

Ascannio - stock.adobe.com.

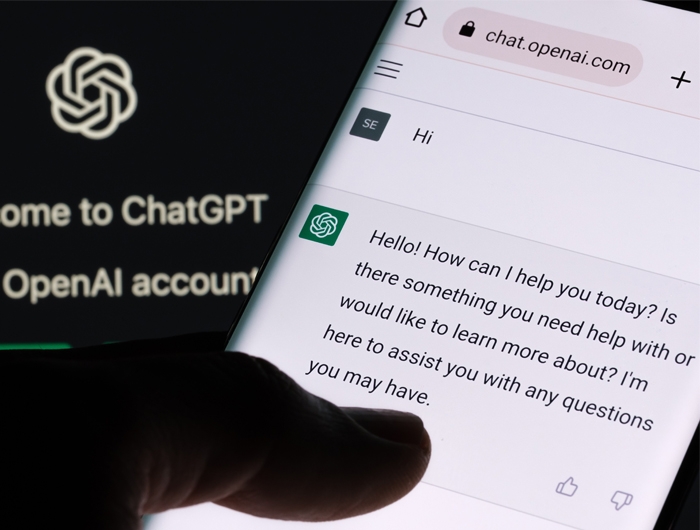

It’s a “scarily good,” “awe-inducing and terrifying,” “glimmer of how everything is going to be different going forward” “that’s taking the information world by storm,” according to various news accounts.

Welcome to ChatGPT, an astonishing Artificial Intelligence (AI) technology introduced last November with which anyone can converse and that will instantly answer, coherently and confidently, your questions about virtually anything. And it can do so in your choice of prose, poetry, song, sonnet, or rap. Even in a two-way conversation. And in multiple languages.

Ask ChatGPT, for example, what's a checkpoint inhibitor in cancer treatment and, within two seconds, it explains, “A checkpoint inhibitor is a type of immunotherapy that blocks certain proteins (checkpoints) on the surface of cancer cells that help them evade the immune system. By blocking these checkpoints, the immune system is better able to recognize and attack the cancer cells.”

Wow! If answers are that quick and clear, no wonder Google called a “red alert” emergency to address this threat to its dominance of Internet searching and then introduced its own AI helper called Bard.

ChatGPT scored 1020 out of a possible 1600 points on the SAT College exams in math, reading and writing (that’s the median score for entering freshmen at California State University Los Angeles or Western Illinois University). It performed at a C+ level in exams for four courses at the University of Minnesota Law School and at a B to B- level on a business management course exam at The University of Pennsylvania’s Wharton School of Business. And it scored at or near passing on the U.S. Medical Licensing Examination, which MDs are required to pass for medical licensure.

Pretty impressive, but ChatGPT is also fun. Ask the bot (short for robot) to write, for instance, a poem in the style of Robert Frost about choosing chocolate or vanilla ice cream and it immediately spits out:

Two flavors stood before my sight,

Two bowls of creamy, frozen delight.

One, chocolate rich and smooth,

The other, vanilla, cool and sooth.

And as I pondered, lost in thought,

Of which one I should choose, I sought.

The sweetness of the chocolate swirl,

Or the classic, creamy flavor of the pearl.

The flip side is that this technology can become downright creepy if you provoke it hard enough in conversation. A New York Times reporter felt so unsettled when Microsoft’s Bing chatbot, which is built on OpenAI’s technology, professed its love for him in an emotional two-hour conversation “that I had trouble sleeping afterward.”

The GPT in ChatGPT stands for “Generative Pre-Trained Transformer,” a geeky name if there ever was one. It’s the product of OpenAI, a San Francisco tech firm founded with backing from Silicon Valley heavyweights like Elon Musk, Reid Hoffman (LinkedIn), and Peter Thiel (PayPal).

Its AI bot scanned millions of documents from the Internet and then humans taught it how to use that information to write intelligible — and intelligent — responses to questions. It is one of many AI technologies in development or already being used in business and medicine. ChatGPT is the first to go public with a user-friendly interface, free for anyone to try. Little wonder an estimated 100 million people signed up during its first two months.

ChatGPT’s impact is already rippling through business, education, the IT world (it can write computer code) and public life. Microsoft is investing billions of dollars in OpenAI’s work and has incorporated an improved version into its search engine Bing. ChatGPT has even prepared a speech for Democratic Congressman Jake Auchincloss to deliver in the U.S. House of Representatives.

However, not everyone is delighted that it’s so easy to obtain credible-sounding written material. Public school systems in New York, Los Angeles, Seattle, and elsewhere have shut off student access to ChatGPT for fear of an avalanche of plagiarism. And some universities are scrambling to find replacements for traditional term papers in their course requirements.

Still, for all its cleverness and brilliance (its IQ has been clocked at 147), ChatGPT is just a robot that can slice, dice and reproduce only the information it’s been fed. And it doesn’t know what’s correct and what isn’t.

One concerning issue is the nature of the information provided to the chatbot. In a world of alternative facts, conspiracy theories, and propaganda, deciding what to feed it is not an easy call for ChatGPT’s programmers. How will the bot know what’s true? Has it been told that the rioters at the U.S. Capitol on January 6th were merely sightseers? That COVID vaccines contain microchips so people can be tracked? Are acolytes of Vladimir Putin trying to manipulate its algorithms?

The accuracy of information entered into an AI system is a particularly important issue for medical decisions. For instance, many hospitals use AI-based clinical algorithms to decide which patients need care. An older iteration of one of these algorithms was biased against Black patients, who had to be deemed much sicker than White patients to be recommended for the same care. That algorithm, eventually corrected, had been trained on misleading data about Black patients, data shaped by long-standing systemic disparities and socioeconomic inequality.

So far, OpenAI has been careful about what it has provided to ChatGPT. But will other outfits be able and willing to put up guardrails against dubious information in their AIs?

Moreover, even when provided with accurate information, ChatGPT can get it wrong. Sometimes it puts words, names, and ideas together that appear to make sense but actually don’t belong together, such as discussing the record for crossing the English Channel on foot or why mayonnaise is a racist condiment. These are called “hallucinations.”

Websites have sprouted up collecting examples of ChatGPT’s hallucinations, such as bibliographies of names and books that don’t exist and long lists of bogus legal citations.

We experienced ChatGPT’s fictions firsthand in January. To be fair, ChatGPT answered a lot of our questions accurately and clearly, but it stumbled when the topic turned to mutations in the BRCA1 and BRCA2 genes, which can greatly increase the risk of breast, ovarian, prostate and other cancers. Are there racial disparities, we asked, in who has BRCA mutations?

In its answer, ChatGPT got off to a rough start, declaring in its customary authoritative tone that African American women were more likely to be diagnosed with breast cancer. Uh, not true. African American women are more likely to die from breast cancer, but White women are more likely to get the cancer in the first place.

“A study conducted in the United States,” the bot continued, “found that African American women with breast cancer were more likely to carry BRCA1 mutations than white women with breast cancer.”

Really? The latest and by far largest study found that the prevalence of BRCA1 mutations was the same in both groups. So we asked ChatGPT what study it was referring to.

“I apologize,” the bot surprisingly responded, “my previous statement was not accurate, there is no specific study conducted in the United States that has found African American women with breast cancer are more likely to carry BRCA1 mutations than white women with breast cancer.”

Good to know, but it is not reassuring that we had to challenge the bot to find this out. ChatGPT then proceeded to describe two more studies about genetic mutations in African American women that don’t exist, and then once more cited the study it had just conceded was a mistake!

Maybe these are merely growing pains. OpenAI says it’s learning from ChatGPT’s mistakes. The new, improved version built into the Bing search engine now knows that White women are more likely than Black women to be diagnosed with breast cancer and that both groups are equally likely to carry BRCA1 mutations (and it cites its sources in footnotes!).

In the meantime, maybe there should be a warning on health material for the public produced by ChatGPT and other AIs. “This was produced by AI but not fact-checked by a human.” Or better yet, “This was produced by AI and fact-checked by a human.”

To experience ChatGPT for yourself, join the waitlist or sign up for the Bing ChatGPT.
